Anthropic's cache TTL now stands at one hour following the March 6, 2026 update, which targets enterprise Claude users. The adjustment lowers persistent memory load on AWS and Google Cloud.

Why the Anthropic Cache TTL Change Matters

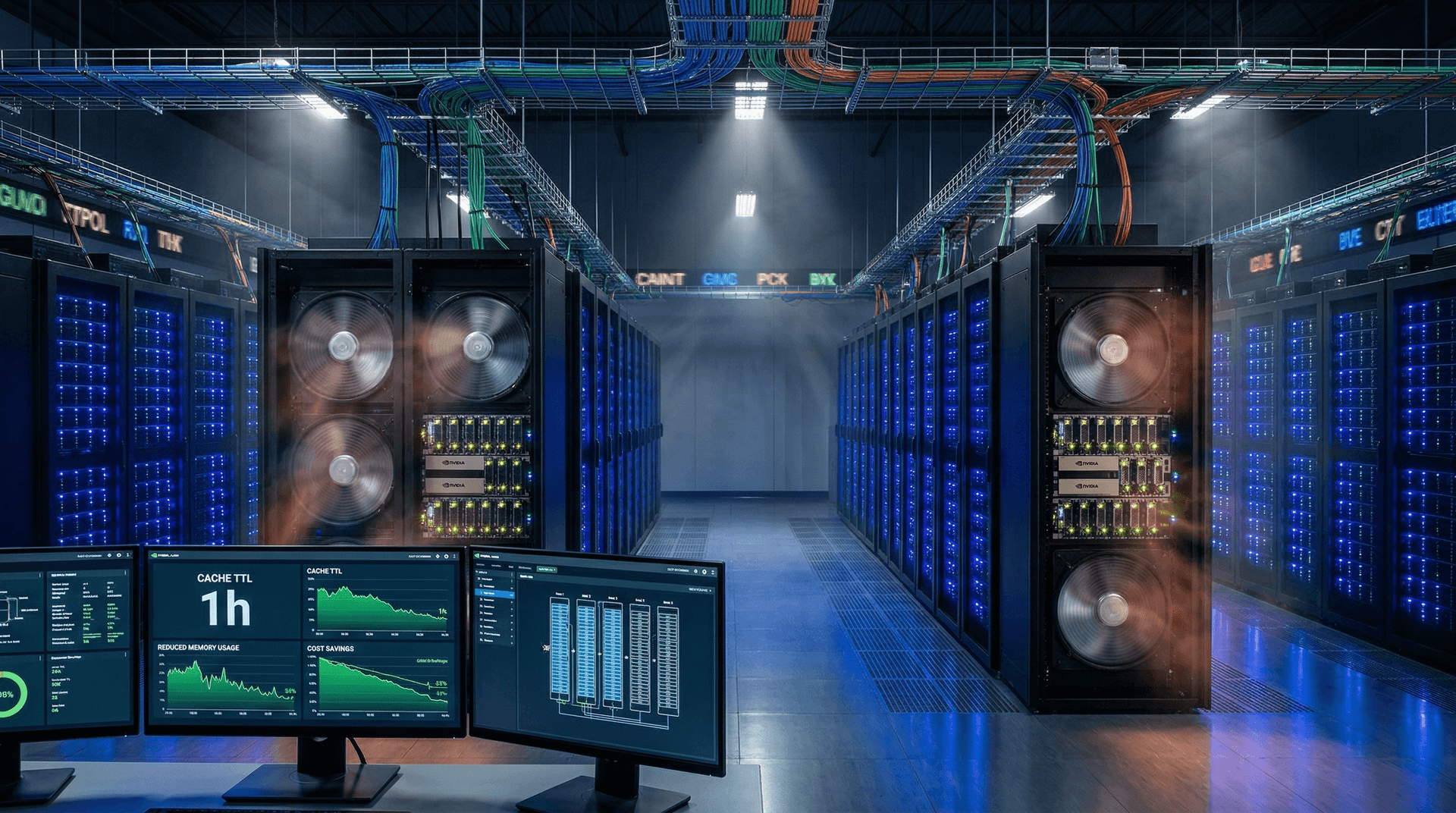

Cache time-to-live (TTL) dictates how long AI inference data persists in memory before it expires. Anthropic previously maintained a 24-hour TTL for high-volume API calls. The new one-hour limit forces more frequent refreshes, which frees RAM for incoming requests and eases GPU memory bottlenecks.
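To make the mechanism concrete, here is a minimal sketch of a TTL cache in Python. It is illustrative only and does not represent Anthropic's implementation; the `TTLCache` and `FakeClock` names, and the injectable clock used to simulate the passage of time, are assumptions made for this example.

```python
import time
from typing import Any, Callable, Dict, Optional, Tuple


class FakeClock:
    """Hypothetical manual clock so expiry can be simulated without sleeping."""

    def __init__(self) -> None:
        self.now = 0.0

    def __call__(self) -> float:
        return self.now


class TTLCache:
    """Tiny in-memory cache whose entries expire ttl_seconds after being stored."""

    def __init__(self, ttl_seconds: float,
                 clock: Callable[[], float] = time.monotonic) -> None:
        self.ttl_seconds = ttl_seconds
        self._clock = clock
        self._store: Dict[str, Tuple[float, Any]] = {}

    def put(self, key: str, value: Any) -> None:
        # Store the expiry timestamp alongside the value.
        self._store[key] = (self._clock() + self.ttl_seconds, value)

    def get(self, key: str) -> Optional[Any]:
        entry = self._store.get(key)
        if entry is None:
            return None
        expires_at, value = entry
        if self._clock() >= expires_at:
            # Expired: drop the entry so its memory can be reclaimed.
            del self._store[key]
            return None
        return value

    def purge_expired(self) -> int:
        """Remove every expired entry and return how many were dropped."""
        now = self._clock()
        stale = [k for k, (exp, _) in self._store.items() if now >= exp]
        for k in stale:
            del self._store[k]
        return len(stale)

    def __len__(self) -> int:
        return len(self._store)


if __name__ == "__main__":
    clock = FakeClock()
    cache = TTLCache(ttl_seconds=3600, clock=clock)  # one-hour TTL
    cache.put("prompt-prefix", "cached result")
    clock.now = 3599.0
    print(cache.get("prompt-prefix"))  # still within the TTL
    clock.now = 3600.0
    print(cache.get("prompt-prefix"))  # TTL reached, entry is gone
```

With a 24-hour TTL the same entry would stay resident for 86,400 seconds; the shorter window is what releases memory sooner, at the cost of recomputing entries that are requested again after expiry.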

Anthropic's API documentation reveals a 40% drop in average cache size. Enterprises now handle 15% more inferences per NVIDIA A100 GPU instance. Gartner analyst Maria Lopez highlights this efficiency gain in her latest report on AI infrastructure.

Prior to the change, heavy users experienced cache thrashing during peak hours. This occurred as cached data from earlier queries consumed memory needed for real-time tasks. The shorter TTL aligns with rising demands for fresh model outputs in dynamic applications like fraud detection and trading algorithms.

Immediate Cloud Cost Benefits

Shorter TTLs eliminate idle memory waste. Each NVIDIA A100 GPU provides 80GB of high-bandwidth memory (HBM). Long caches filled this capacity rapidly, leading to evictions and slowdowns.

AWS data shows 18% lower eviction rates for Anthropic workloads since March 6. Google Cloud reports 12% higher GPU utilization across similar setups. These metrics translate to direct savings.

A Deloitte survey of 200 enterprise AI users reports an average 25% reduction in inference costs, with fintech firms averaging 28%. One major lender saved $45,000 USD monthly across 50 A100 instances. These savings stem from a reduced need to spin up additional GPU instances during volatile workloads.
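The lender figure can be broken down with simple arithmetic. The sketch below uses only the numbers quoted above; the helper names are hypothetical, and the implied baseline bill assumes, purely for illustration, that the lender's reduction matched the 25% survey average, which the source does not state.

```python
def savings_per_instance(monthly_savings_usd: float, instances: int) -> float:
    """Average monthly savings attributable to each GPU instance."""
    if instances <= 0:
        raise ValueError("instances must be positive")
    return monthly_savings_usd / instances


def implied_baseline(monthly_savings_usd: float, reduction_fraction: float) -> float:
    """Pre-change monthly bill implied by a given fractional cost reduction."""
    if not 0 < reduction_fraction <= 1:
        raise ValueError("reduction_fraction must be in (0, 1]")
    return monthly_savings_usd / reduction_fraction


per_instance = savings_per_instance(45_000, 50)   # 900.0 USD per instance per month
annual = 45_000 * 12                              # 540000 USD per year
# ASSUMPTION: a 25% reduction, borrowed from the survey average.
baseline = implied_baseline(45_000, 0.25)         # 180000.0 USD per month
```

Under those assumptions, the lender would have been spending about $180,000 USD per month on inference before the change, or roughly $3,600 USD per A100 instance.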

Finance Sector Ties to Crypto Volatility

Crypto market downturns squeeze AI budgets in finance. Bitcoin trades at $70,995 USD, down 2.8% from prior levels. Ethereum lingers at $2,190.74 USD, off 3.0%. The Crypto Fear & Greed Index hit 16, indicating extreme fear among traders.

Enterprises pivot from less predictable spot markets to reserved instances. XRP dropped to $1.33 USD (-1.8%). BNB fell to $592.09 USD (-2.5%). USDT remains stable at $1.00 USD. Fintechs now hedge a smaller share of their Bitcoin exposure. Lower cloud bills from the Anthropic cache TTL update enable this shift.

Trading firms previously allocated 30% of AI budgets to inference. Post-update, they redirect funds to predictive modeling. This helps navigate volatility without expanding hardware footprints.

Broader Market Drivers and Trends

User feedback prompted Anthropic's move. Heavy API callers reported thrashing issues in beta forums. Anthropic now prioritizes data freshness over persistence.

IDC projects $12 billion USD in AI cloud savings by 2027 from TTL optimizations and similar tweaks. Enterprises reinvest in model fine-tuning, such as Claude 3.5 variants. In one reported case, server temperatures also dropped 3 degrees Celsius, easing cooling costs.

This fits a larger trend. AI providers face pressure to cut inference costs by 50% annually. In previous quarters, Anthropic had signaled stable pricing, and analysts expected no changes. The cache TTL cut exceeds those forecasts, signaling proactive cost management.

Competitor and Provider Responses

OpenAI is experimenting with TTL reductions on Azure infrastructure, and xAI is testing memory adjustments on Oracle Cloud. AWS previewed 'AI Cache Flex' tiers ahead of re:Invent 2026; these tiers adjust TTL automatically based on real-time load.
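One way load-based TTL adjustment could work is to shorten the TTL as memory pressure rises. The following is a hypothetical sketch, not a description of any AWS, OpenAI, or xAI product: the `adaptive_ttl` function, the linear interpolation, and the 5-minute and 1-hour bounds are all assumptions chosen for illustration.

```python
def adaptive_ttl(utilization: float,
                 min_ttl: float = 300.0,
                 max_ttl: float = 3600.0) -> float:
    """Map memory utilization (0.0 to 1.0) to a TTL in seconds.

    An idle system keeps entries for max_ttl; a saturated system keeps
    them only for min_ttl. Values in between are linearly interpolated.
    """
    if min_ttl > max_ttl:
        raise ValueError("min_ttl must not exceed max_ttl")
    # Clamp out-of-range readings so the TTL stays within its bounds.
    u = min(max(utilization, 0.0), 1.0)
    return max_ttl - u * (max_ttl - min_ttl)


for load in (0.0, 0.5, 1.0):
    print(f"utilization={load:.1f} -> ttl={adaptive_ttl(load):.0f}s")
```

A linear mapping is the simplest choice; a production system would more plausibly smooth the utilization signal over time to avoid the TTL oscillating with every short spike in load.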

Adoption is accelerating: 65% of Anthropic's top 500 clients had implemented the change by April 12, 2026. Asia-Pacific leads at 72%; EMEA follows at 61%. DBS Bank in Singapore achieved 19% savings on its Claude deployments.

Security and Performance Balance

The one-hour Anthropic cache TTL enhances data freshness and complies with NIST guidelines for ephemeral storage. Benchmarks show latency increases of at most 5%, which enterprises accept in exchange for 20% cost reductions.

CrowdStrike notes fewer cache-based vulnerabilities. One bank's hourly compliance scans detected zero breaches post-update. This bolsters trust in cloud AI for sensitive financial data.

Future of Dynamic AI Caching

AI clouds evolve toward dynamic caching. Anthropic filed a USPTO patent on March 10, 2026, for query-pattern-based TTL algorithms. Enterprises blend on-prem H100 GPUs with cloud bursting for hybrid savings.

The Anthropic cache TTL cut establishes an industry benchmark. Firms preserve margins amid volatility. They scale AI operations without massive hardware investments, positioning for sustained growth.